It's our job to prevent website downtime, but we've all been there: "Error 521: Web server is down", "This site can't be reached", "Cannot find the server specified". These are never good messages to see. It's bad enough when you push an update to the server, refresh the page, and see one of these messages. It's even worse when you wake up and find that one of your core providers is suffering a major issue. When the problem is out of your control, you are at the mercy of someone else and have no option but to wait it out (worst feeling ever!).

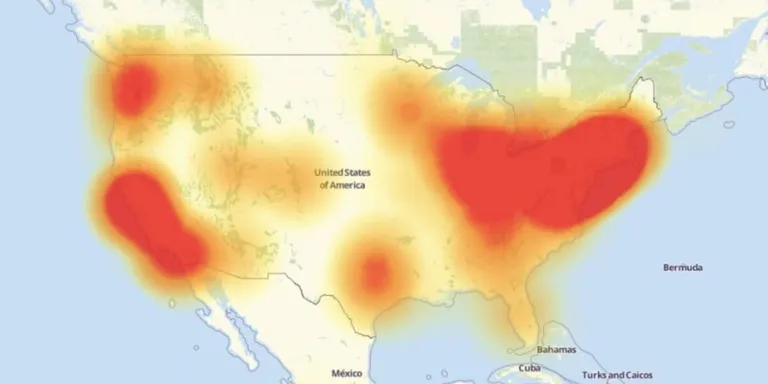

The entire Internet was put to the test in October 2016 when Dyn (DynDNS) suffered a massive DDoS attack that crippled thousands of websites. No matter how big or small your website is, these principles apply to you.

Understand the big picture

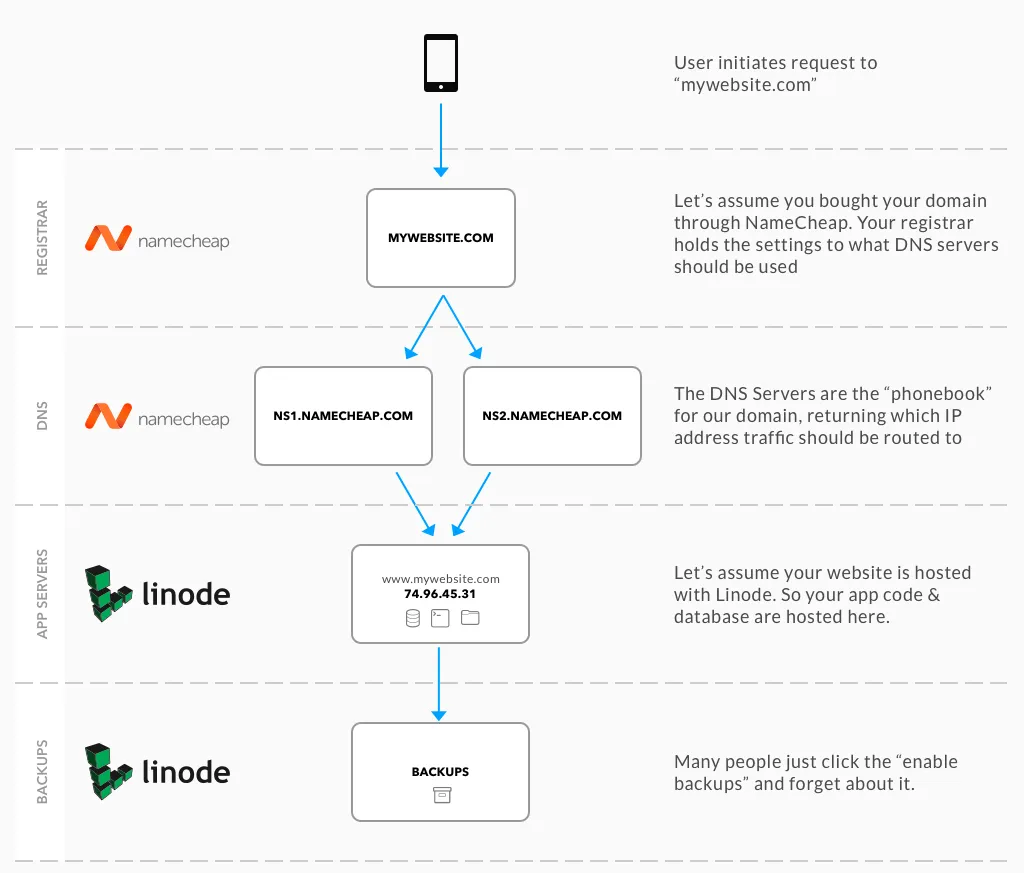

Every website depends on these four major components:

- Domain Registrar

- DNS Server

- Application & Database Servers

- Backups (some people skip this one)

What confuses most people is that a lot of these services are bundled through one provider, and this is where things get nasty. Here is what you'll usually find on the internet: one provider (NameCheap in this example) handles both the domain registration and the DNS, while another (Linode) handles both the servers and the backups.

This configuration has two points of failure:

- If NameCheap goes down, no one can resolve "mywebsite.com". I also lose the ability to change any DNS settings.

- If Linode goes down, no one can visit my website. I also lose access to all of my data. I can't even grab a backup and restore it at a new provider like Digital Ocean.
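
To make these two points of failure concrete, here is a minimal Python sketch. The `BUNDLED_SETUP` mapping and both function names are hypothetical, made up for illustration, and the provider assignments are inferred from the two bullets above rather than taken from any real configuration.

```python
from collections import defaultdict

# Hypothetical layout inferred from the bullets above:
# each of the four components mapped to the provider that hosts it.
BUNDLED_SETUP = {
    "Domain Registrar": "NameCheap",
    "DNS Server": "NameCheap",
    "Application & Database Servers": "Linode",
    "Backups": "Linode",
}


def components_by_provider(setup):
    """Group components by the provider they depend on."""
    grouped = defaultdict(list)
    for component, provider in setup.items():
        grouped[provider].append(component)
    return dict(grouped)


def bundled_providers(setup):
    """Return providers that host more than one component, sorted by name."""
    grouped = components_by_provider(setup)
    return sorted(p for p, comps in grouped.items() if len(comps) > 1)


if __name__ == "__main__":
    for provider, comps in components_by_provider(BUNDLED_SETUP).items():
        print(f"If {provider} goes down, I lose: {', '.join(comps)}")
```

Any provider returned by `bundled_providers` takes more than one component with it during an outage, which is the situation the rest of this post tries to avoid.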

These types of failures are all too real. In January 2016, Linode suffered a massive DDoS attack. Our own systems were affected, and so was Taylor Otwell's Envoyer service:

Also of note: Linode's backups don't help you when they can only be restored back into same region that is down :|

— Taylor Otwell (@taylorotwell) January 2, 2016

Luckily, there are very inexpensive strategies that you can use to prevent this from happening.

Don't put all of your eggs in one basket

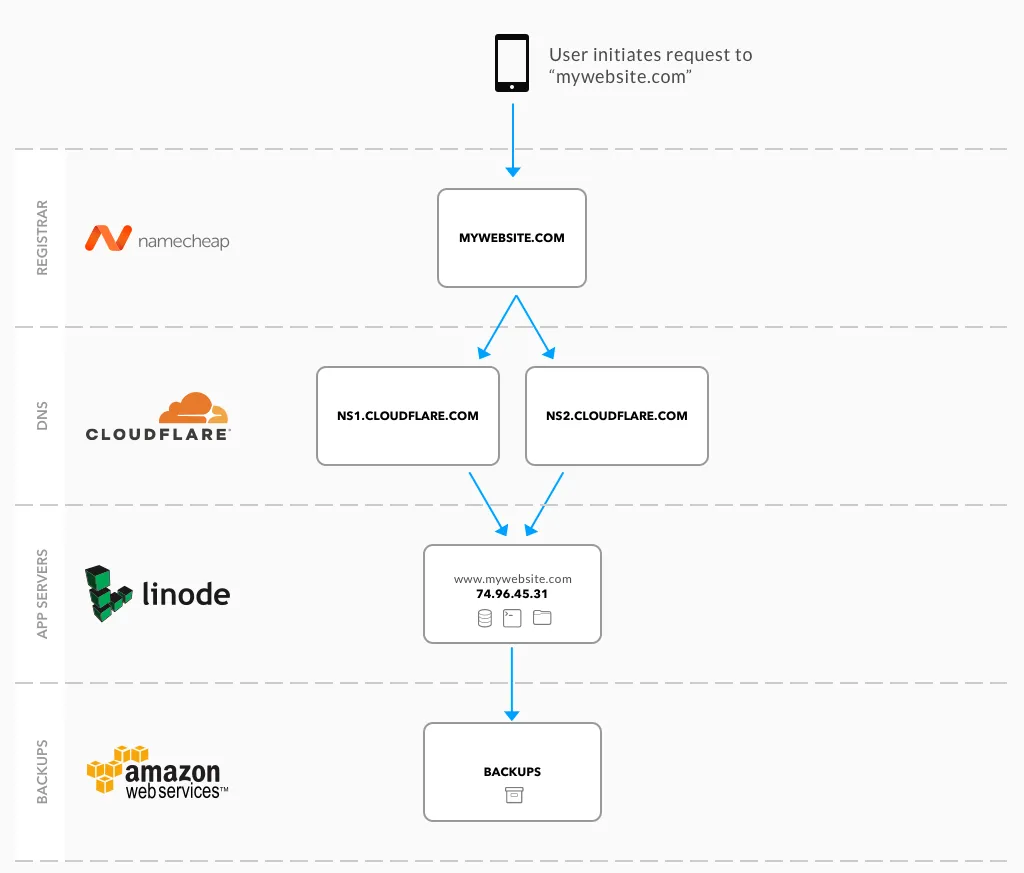

To maximize your control over and access to your website's data, spread the responsibility for these services across different providers. For the situation above, here is how we can quickly achieve this: keep the domain registered at NameCheap, move DNS to CloudFlare, keep the servers on Linode, and send backups to Amazon S3.

By separating these services, I have far more control over and access to my website than before:

- If NameCheap goes down: My website will still load and function normally. I just can't switch DNS servers (which is not the end of the world if it is temporary).

- If CloudFlare goes down: My website will go down, but I can still migrate DNS to a different provider if needed by logging into NameCheap and switching DNS servers.

- If Linode goes down: My website will go down, but I can still get a copy of the backup off of Amazon S3. I can then redeploy the server to Digital Ocean and update the DNS in CloudFlare to point to the new IP address.

- If Amazon goes down: My website will still load and function normally. I just lose my daily backups being stored offsite.
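
The four scenarios above can be checked with a small Python sketch. Everything here is an assumption made for illustration: the role names, the `outage_report` function, and the simplified rules (the site loads only if DNS and servers are both up; a DNS outage is recoverable only if the registrar is still reachable; a server outage is recoverable only if backups and DNS are still reachable).

```python
# Hypothetical role-to-provider mappings based on the bullets in this post.
BUNDLED_SETUP = {
    "registrar": "NameCheap",
    "dns": "NameCheap",
    "servers": "Linode",
    "backups": "Linode",
}

SEPARATED_SETUP = {
    "registrar": "NameCheap",
    "dns": "CloudFlare",
    "servers": "Linode",
    "backups": "Amazon S3",
}


def outage_report(setup, down_provider):
    """Describe what happens to the site when one provider is down."""
    down = {role for role, provider in setup.items() if provider == down_provider}

    # Visitors need both DNS resolution and the servers themselves.
    site_up = "dns" not in down and "servers" not in down

    # Assume I can work around the outage unless a needed tool is also down.
    recoverable = True
    if "dns" in down and "registrar" in down:
        # Cannot point the domain at another DNS provider.
        recoverable = False
    if "servers" in down and ("backups" in down or "dns" in down):
        # Cannot fetch a backup, or cannot repoint DNS to a new server.
        recoverable = False

    return {"site_up": site_up, "recoverable": recoverable}


if __name__ == "__main__":
    for name, setup in [("bundled", BUNDLED_SETUP), ("separated", SEPARATED_SETUP)]:
        for provider in sorted(set(setup.values())):
            print(name, provider, outage_report(setup, provider))
```

Under these simplified rules, every single-provider outage in the separated setup either leaves the site up or leaves a path to recovery, while both outages in the bundled setup leave me waiting on the provider.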

Nothing will ever have 100% uptime, but spreading these four major functions across separate providers makes an outage hurt a lot less.

Don't overcomplicate things

There are lots of other methods that you can use to minimize downtime (load balancing servers, multiple DNS servers, etc.), but remember: the more you add, the more complicated your day-to-day tasks become. For some sites these more advanced methods are worth using, but the structure I have above is available to everyone at very little cost and without expensive licensing. You can get this entire setup for under $100/year, which is cheaper than most BlueHost packages.

What do you use to prevent downtime? Any strategies or horror stories? Feel free to drop a comment below or reach out to me on Twitter!
